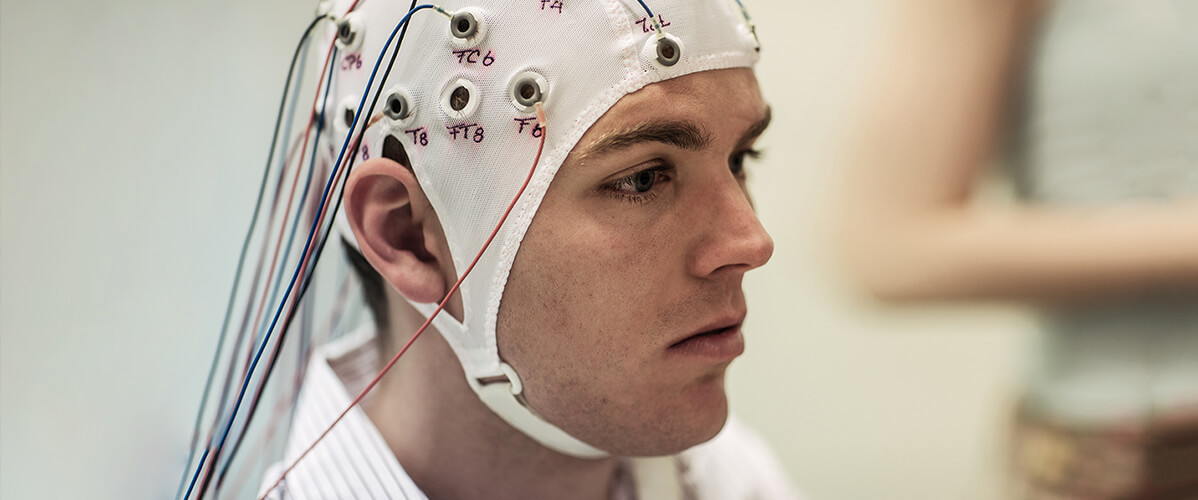

A brain-machine interface (BMI) is a direct communication link between the brain and an external device, such as an electronic system, a computer or a tablet, that allows a person to act through thought. How? First, brain activity is recorded, usually through electrodes placed on the scalp; that is, the system captures the electrical signals emitted when the person focuses attention on a thought or a specific action. Next, software analyses and interprets these signals and converts them into commands for the machine.
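The record-then-interpret loop described above can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: the function names, the 128 Hz sample rate, the synthetic sine-wave "recording", and above all the decoding rule (comparing power in the 8-12 Hz and 13-30 Hz bands), which stands in for the far more elaborate signal processing a real system would use.

```python
import math


def band_power(samples, sample_rate, low_hz, high_hz):
    """Average spectral power of `samples` between low_hz and high_hz (plain DFT)."""
    n = len(samples)
    total, count = 0.0, 0
    for k in range(1, n // 2):
        freq = k * sample_rate / n
        if low_hz <= freq <= high_hz:
            re = sum(s * math.cos(2 * math.pi * k * i / n) for i, s in enumerate(samples))
            im = sum(s * math.sin(2 * math.pi * k * i / n) for i, s in enumerate(samples))
            total += (re * re + im * im) / (n * n)
            count += 1
    return total / count if count else 0.0


def decode_command(samples, sample_rate=128, threshold=2.0):
    """Hypothetical rule: dominant 8-12 Hz activity means "REST", otherwise "MOVE"."""
    alpha = band_power(samples, sample_rate, 8, 12)
    beta = band_power(samples, sample_rate, 13, 30)
    ratio = alpha / (beta + 1e-12)  # small constant avoids division by zero
    return "REST" if ratio > threshold else "MOVE"


def synthetic_signal(freq_hz, sample_rate=128, seconds=1.0):
    """Stand-in for an electrode recording: a pure sine wave at freq_hz."""
    n = int(sample_rate * seconds)
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]


if __name__ == "__main__":
    for freq in (10, 20):
        print(freq, "Hz ->", decode_command(synthetic_signal(freq)))
```

The sketch keeps the two stages separate on purpose: `band_power` plays the role of signal analysis, and `decode_command` plays the role of turning the result into a command for the machine.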

The first work on BMIs began in the 1970s at the University of California, Los Angeles (UCLA). Research progressed rapidly from the 1990s, especially in healthcare, with the idea of “fixing” human functions. Tetraplegic individuals could thus control an exoskeleton by thinking about getting up and moving; amputees could control a bionic prosthesis; patients with locked-in syndrome could communicate with a computer and write through thought…

The best-known example is probably Matthew Nagle, a former football star who became quadriplegic after being stabbed. In 2004, he became the first human to use a BMI to restore certain functions lost to paralysis.

He was implanted with BrainGate, a system of one hundred “invasive” electrodes, that is to say electrodes connected directly to the cerebral cortex, developed by the company Cyberkinetics in collaboration with Brown University’s neuroscience department. This allowed Matthew Nagle to control a computer cursor and a robotic arm, operate the TV and lighting, read his emails and play Pong.

Thanks to the many advances made in recent years, BMIs can not only restore lost faculties (movement, hearing and sight), but could also soon augment them.

Another promising area of BMI application is gaming. The promise? To allow players to move around and interact with virtual environments, control actions in a game by thinking, or have the content of the game itself adapt to the user’s mental state.

Since the early 2000s, researchers have been testing BMI technologies in video game settings. Although some companies already market consumer products based on electroencephalography, research remains experimental.

Among the most interesting projects is OpenViBE2. This collaborative research project, conducted from 2009 to 2013 by academic laboratories (such as INRIA), video game companies including Ubisoft, and virtual reality specialists (Leprechaun, Clarity), focused on the potential of brain-computer interfaces (BCI) in the field of video games, with an original approach.

BCIs are treated not as substitutes for traditional gestural interfaces (gamepad, joystick, mouse, etc.), but rather as “a way to play in a new way, complementary to traditional techniques”.

OpenViBE2 enabled researchers to make important scientific advances in neuroscience, in the processing of electrical brain signals, and in human-machine interfaces and virtual reality, and to invent new concepts to “interact with more video games in a more original and effective way”. Examples include a “multiplayer” brain-computer interface, which allows two players to play together or against each other in a simplified soccer game, and the automatic adaptation of the virtual world to the player’s mental state…