The Tactile Facile app brings together many years of research into human-machine interaction for enhanced accessibility on the phone interface.

The issue of disability and accessibility in digital services is seldom addressed in full. Whether visual, auditory, motor-based, or linked to understanding, the needs of users are very diverse. Technical aids added to standard interfaces can usually meet these needs, but at the cost of making users adopt shortcuts and complex additional manipulations: this is, for instance, the case with eye control and sign language avatars.

Additionally, today’s standard touch-based interactions are quite simple: a tap, a swipe, or a long press. However, they often cause manipulation errors: barely touching the screen can result in it “coming to life”. They do not offer solutions to people who have difficulty using their fingers to point. Nor do they provide a simple way to hear the functions and content being displayed, though this would be very useful for people with reading difficulties.

Finding the best compromise between constraints and needs

Orange and its research, design, development, marketing and human resources teams have been working for around ten years now to satisfy these diverse needs in terms of the telephone interface and to provide a simple accessibility solution. The joint work carried out by Eric Petit, a research engineer in human-machine gestural interaction, and Denis Chêne, an ergonomics researcher in human-machine interaction, both working with Orange, has been key. After researching their respective specialities, they pooled their work so as to design a technology that takes into account both the technical constraints expressed on the one hand, and the constraints inherent in the user’s needs expressed on the other.

Eric Petit has been working on gestural interaction since the 2000s. He gradually moved towards accessibility issues as applied to touch-based interfaces.

For his part, Denis Chêne, an ergonomics specialist, is interested in ways to adapt technology to humans. In order to tackle the accessibility of user interfaces, he focused on the subject of multimodality. Through discovering the extent and variety of needs in this area, he found that these were hardly ever taken into account in the technical solutions put forward up to that point.

By drawing together their long-term research goals, Eric Petit and Denis Chêne have made the Tactile Facile application possible. Eric Petit and his team first developed DGIL (Dynamic Gesture Interaction Layer technology), a powerful touch-based gesture recognition engine able to recognise symbolic gestures consisting of one or more strokes. For instance, with DGIL it is possible to draw a heart on a screen in order to access a function more quickly, rather than having to click on a graphic. A DGIL extension was then created which resulted in a system able to manage a multitude of gestures and analyse them in real time.

Lastly, in order to control event programming and the coupling between events and commands, another technical element was developed: AEvent. “This component adds a lot of flexibility to the software architecture,” Eric Petit emphasises. “Such flexibility is needed to create multi-profile interfaces.”
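The idea of coupling events to commands can be illustrated with a minimal sketch. It does not show the AEvent API, which is not described here: the class `EventBinder`, the event names and the bindings chosen for each profile are all assumptions made for illustration. The point is only that, once the coupling is held in a table rather than hard-coded, the same command can be reached through a different gesture depending on the active profile.

```python
from typing import Callable, Dict, Tuple

# Hypothetical names throughout: this is NOT the AEvent API.
Command = Callable[[], str]


class EventBinder:
    """Maps (profile, event) pairs to commands so bindings can differ per profile."""

    def __init__(self) -> None:
        self._bindings: Dict[Tuple[str, str], Command] = {}

    def bind(self, profile: str, event: str, command: Command) -> None:
        self._bindings[(profile, event)] = command

    def dispatch(self, profile: str, event: str) -> str:
        command = self._bindings.get((profile, event))
        # Unbound events are ignored rather than raising, so a profile
        # can simply leave out the gestures it does not support.
        return command() if command else "ignored"


def validate() -> str:
    return "validate"


binder = EventBinder()
# Assumed bindings: which gesture validates in which profile is illustrative.
binder.bind("Easy+", "tap", validate)
binder.bind("Motor+", "long_press", validate)
```

With these assumed bindings, a tap validates in the Easy+ profile but is ignored in Motor+, where only a long press triggers the same command.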

At the same time, Denis Chêne analysed tests undertaken by Orange employees with disabilities for several years. With his team, he was able to distinguish three major types of diversity from the standpoint of the telephone interface:

● diversity of perception (visual, auditory, touch-based, a mix of all three, etc.),

● diversity of understanding (novices/experts among people without disabilities or among people with specific mental constraints),

● and lastly, diversity of manipulation (ability to use fingers to point or not, use of elbows to touch an interface for some kinds of motor disability, etc.).

“Identifying this raises the issue of multimodality and the myriad possible combinations that it generates,” explains Denis Chêne.

The challenge then turned to finding a way to consider all these points of view simultaneously. At this point, Eric Petit and Denis Chêne pooled their work to come up with a universal design approach, known as Menu Design for All (Menu DfA), aligning this diversity of perception, understanding and manipulation. This innovation brings together information presentation components that can be manipulated in different logic frameworks. The entire design principle of Menu DfA is to be at least able to handle the most limiting situations. Eric Petit and Denis Chêne have determined that, in terms of human-machine interaction, the list is the easiest way to reconstruct any complex object. The toolbar of a simplified word processor in the form of a list of possible choices, for instance, makes it very easy to use. The most complex problems relating to the interaction profile can thus be solved thanks to the list, and more generally to the Menu DfA.

An app better suited to each user’s profile

Eric Petit and Denis Chêne’s project to devise a touch-based telephone interface that is accessible to all won over the Accessibility Division at Orange, which was responsible for building the offer and managing its multi-channel distribution. The product is part of an ethnographic, design-for-all approach.

All these years of work have thus resulted in the Tactile Facile solution: a free application available on Orange mobile devices running Android version 6 in France, Spain and soon Romania. Three European countries will follow in early 2019.

It allows customers to easily access the basic functions of a phone: making calls, sending texts, and accessing apps. The actions are simple and adapted to different user profiles.

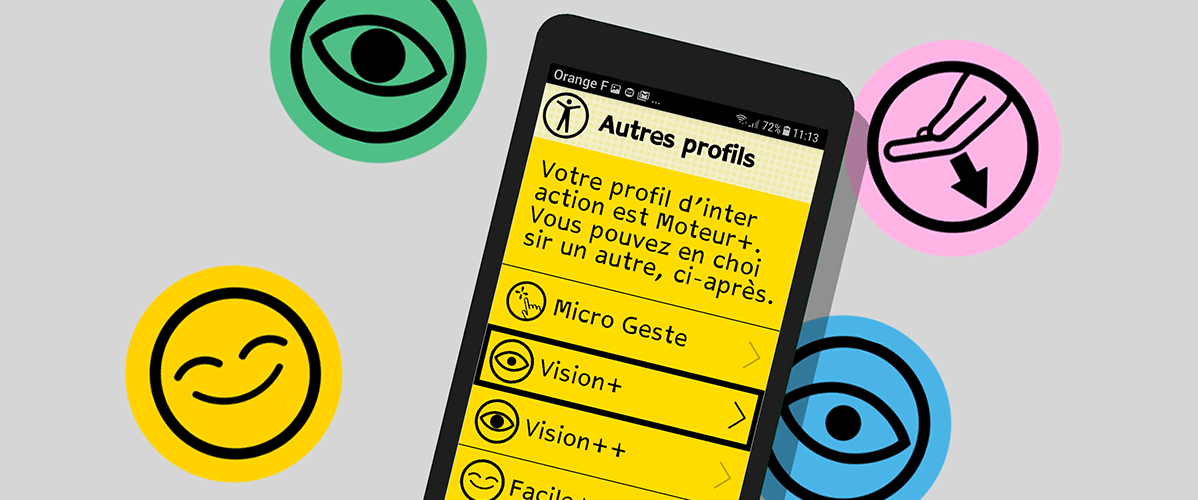

Thanks to the Menu DfA technology, five interaction profiles were created (this list is not definitive) with a defined preset for given types of needs: Easy+ for beginners, Vision+ and Vision++ for the visually impaired, Motor+ for people with manipulation difficulties, and Micro-gesture for people with motor difficulties but who can point accurately.

The innovative nature of this new app lies in its multi-profile approach, which provides several ways of interacting with the interface, as well as a number of interaction principles such as “micro-gesture” for people with motor disabilities who can only make small browsing gestures. Thanks to micro-gesture, the focus can be moved effortlessly with micro-movements of the finger, with no need to point or lift: this is known as “de-colocalised pointing.” In this case, the sensitivity of interaction can also be adjusted by the user.
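The principle of moving the focus without pointing can be sketched as follows. This is an illustration under stated assumptions, not the app's implementation: the class `MicroGestureFocus`, the `step_px` sensitivity parameter and the use of vertical displacement in pixels are all hypothetical. The sketch accumulates small relative movements of the finger, wherever it rests on the screen, and shifts the focus by one list item each time the accumulated distance reaches the chosen step.

```python
class MicroGestureFocus:
    """Moves a list focus from small relative finger movements (illustrative only)."""

    def __init__(self, item_count: int, step_px: float = 20.0) -> None:
        if item_count <= 0 or step_px <= 0:
            raise ValueError("item_count and step_px must be positive")
        self.item_count = item_count
        # Assumed sensitivity setting: a smaller step means less movement is needed.
        self.step_px = step_px
        self.focus = 0
        self._accumulated = 0.0

    def on_move(self, dy: float) -> int:
        """Takes the vertical displacement since the previous touch sample, in pixels."""
        self._accumulated += dy
        steps = int(self._accumulated / self.step_px)  # truncates toward zero
        if steps:
            # Keep the remainder so that slow, tiny movements still add up.
            self._accumulated -= steps * self.step_px
            # Clamp the focus to the bounds of the list.
            self.focus = max(0, min(self.item_count - 1, self.focus + steps))
        return self.focus
```

Only the displacement matters, not the absolute position of the finger, which is what makes the pointing "de-colocalised" in this sketch.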

Security is also one of the app’s innovations: a user can confirm with a long press rather than a single tap, which is sometimes performed unintentionally.

The Tactile Facile application also offers a “verbosity” setting: vocalisation is triggered either manually by tapping, or automatically by moving the focus to a new item. Vocalised content is adapted to the chosen level: for instance, if the user wants to make a call, the spoken text will be at least “Call” or, for a higher level of verbosity, “Call your friends or reach your contacts”.
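A minimal sketch of how such a setting might select the spoken text is given below. The two phrases come from the example above; everything else (the `Verbosity` levels, the `text_to_speak` function, the event names, and the choice that a tap always vocalises) is an assumption made for illustration.

```python
from enum import IntEnum
from typing import Optional


class Verbosity(IntEnum):
    # Hypothetical levels; the app may define more, or different ones.
    MINIMAL = 0
    DETAILED = 1


# Spoken labels per item and level (phrases taken from the example in the text).
LABELS = {
    "call": {
        Verbosity.MINIMAL: "Call",
        Verbosity.DETAILED: "Call your friends or reach your contacts",
    },
}


def text_to_speak(item: str, verbosity: Verbosity,
                  event: str, automatic: bool) -> Optional[str]:
    """Returns the text to vocalise, or None if this event stays silent."""
    # Assumption: a tap always vocalises; a focus change only in automatic mode.
    if event == "tap" or (event == "focus" and automatic):
        return LABELS[item][verbosity]
    return None
```

In this sketch, moving the focus onto the call item in manual mode stays silent, while the same move in automatic mode speaks the label at the chosen level.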

Each action performed on the interface is accompanied by feedback for the user: vibration under the fingers, vocalisation by the interface following the action, or even while it is being performed for confirmation.

The “motor” profiles are still under development, while the “literacy” profiles are at the test phase and the profiles for hearing and cognitive impairments are at the research phase.

Gema Solana Díaz, Disability Product Marketing Director at Orange, emphasises: “Today, Tactile Facile offers numerous solutions, and research work is not yet at an end. The app is scalable and we hope to launch various profiles adapted to each user in the future.”