How can this be achieved? How should this digital twin be made? What are the theoretical and technological challenges? But first, what are the basic elements for understanding this problem and what is the state of the art?

“The BIM, or a Building Information Model, includes not only information about pure geometry and placement of geometric elements, but also provides descriptive information using semantics.”

The architecture of buildings, from paper to digital

The digital twin that one would like to see alive, dynamic, extensible and interactive is a relatively recent concept in the field of building architecture.

History, or pre-history, in architecture begins with sketches and freehand drawings. Later, in the Middle Ages, we find the drawing board and the famous Rotrings, well known to older readers, which were used for a long time. Then computers arrived, marking a significant break with former ways. Drafting and editing a plan became easier, faster and more accurate. From 2D (like the black and white of our TVs), we quickly moved to 3D (like colour). In the early days, 3D was just a graphic reflection of a building.

A human seeing this type of plan can easily assimilate it because the brain recognises the different elements. By contrast, for a computer, and potentially for Artificial Intelligence (AI), a wall or any other element of the building was only a graphic representation, as is a picture taken with a digital camera, which today is most often still only a cloud of points. (This article will not deal with object recognition by AI.)

To keep on track and make this easier to understand, imagine that this photo contains not only dots but also hidden text elements that describe its contents. These elements convey that in a certain area of the image there is a bicycle with a set of properties (maybe brand, model or other feature), in another area an element of urban furniture, for example a lamppost with its own properties, and between these two elements a relationship like “the bicycle – is attached – to the lamppost” or “the bicycle – is under – the lamppost”. This is called semantic information: it allows keyword searches and reasoning over existing knowledge (information) to potentially create new knowledge.

To return to the topic of this article, it is time to introduce the concept of BIM:[2] “Building Information Model”. The BIM incorporates not only pure geometry and the placement of geometric elements but also descriptive information with semantics. This information indicates that there is a site on which a building is located, that the building contains floors, rooms, corridors, stairs etc., and that these in turn contain fixtures and fittings, potentially down to the wires that connect a plug to a switch or any other item of information needed by a trade. In addition to properties such as surface area, there are of course other relationships, such as adjacency (a certain corridor being next to specific rooms). As before, these various building elements have properties, such as a unique identifier and a name for the part, and are associated by relationships. With this, the BIM can go much further than a simple plan, as it contains information on construction methods, deadlines, costs, life simulations of the work and maintenance operations. For the BIM, this is called nD (4D, 5D, 6D or 7D), depending on the number of information types it contains.

Today, with the arrival of communicating objects installed in buildings, comes the next stage: using the BIM throughout the life of the building, notably to interact with it. The term “Operational BIM” is emerging.

Operating buildings using digital twins

To meet the operating needs of buildings, whether traditional (e.g. energy management) or new (e.g. pro-active maintenance), the BIM should reflect the physical reality of the building over time and also allow supervision of, and interaction with, the building through its Digital Twin.[3] A Digital Twin is a faithful digital representation of the physical reality of a system, in this case a building.

How should this digital twin be made?

An ontology [4] defines entities (like a room), their properties (say, the room name) and their relationships (for example, an “isIn” relation with a floor entity). An ontology is a sort of common vocabulary: a set of definitions of entities, and of relationships between those entities, that apply to a domain.
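
As a rough illustration of entities, properties and relationships, the sketch below stores a room and a floor as graph nodes linked by an “isIn” relation. It is a minimal sketch, not the data model of “Thing in”: the `Entity` and `Graph` classes, the identifiers and the property names are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    """A graph node: an identifier, an ontology class and free-form properties."""
    uid: str
    kind: str  # e.g. "Room" or "Floor" (hypothetical class names)
    properties: dict = field(default_factory=dict)


class Graph:
    """Stores entities plus (subject, predicate, object) relationships."""

    def __init__(self):
        self.entities = {}
        self.triples = set()

    def add(self, entity):
        self.entities[entity.uid] = entity
        return entity

    def relate(self, subject, predicate, obj):
        self.triples.add((subject.uid, predicate, obj.uid))

    def subjects(self, predicate, obj):
        """Return the entities linked to `obj` by `predicate`, sorted by identifier."""
        uids = sorted(s for s, p, o in self.triples if p == predicate and o == obj.uid)
        return [self.entities[u] for u in uids]


graph = Graph()
floor = graph.add(Entity("F1", "Floor", {"name": "First floor"}))
r1 = graph.add(Entity("R1", "Room", {"name": "Meeting room"}))
r2 = graph.add(Entity("R2", "Room", {"name": "Office"}))
graph.relate(r1, "isIn", floor)
graph.relate(r2, "isIn", floor)

# Query the graph: which rooms are in this floor?
rooms_on_floor = [e.properties["name"] for e in graph.subjects("isIn", floor)]
print(rooms_on_floor)
```

Because the relation is stored as data rather than hard-coded, the same query works for any other predicate an ontology might define.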

An ontology generally covers a particular field (e.g. construction, biology, medicine etc.), which does not mean that one cannot have several ontologies covering the same or related fields, each in a different way depending on the use its designers had in mind. Consequently, there are several ontologies covering the building domain. These can be combined or even aligned.[5] Aligning means stating that a concept defined in ontology x is semantically identical to a concept defined in ontology y, even if the two concepts do not have the same name. This means that all users can view things in the way that best suits their job in the building.
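
Alignment can be pictured as a table of equivalences between concept names. The sketch below groups aligned concepts and checks whether two names denote the same concept. The prefixes `x:`, `y:` and `z:` and the concept names are hypothetical, and the grouping uses a simple union-find structure chosen for this example, not a technique described by the article.

```python
def build_canonical_map(alignments):
    """Map every aligned concept to one representative per equivalence group."""
    parent = {}

    def find(concept):
        parent.setdefault(concept, concept)
        while parent[concept] != concept:
            parent[concept] = parent[parent[concept]]  # shorten the chain as we go
            concept = parent[concept]
        return concept

    for a, b in alignments:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            # Keep the alphabetically smallest name as the representative.
            parent[max(root_a, root_b)] = min(root_a, root_b)
    return {concept: find(concept) for concept in list(parent)}


# Hypothetical alignments between three ontologies x, y and z.
ALIGNMENTS = [("x:Room", "y:Space"), ("y:Space", "z:Zone")]
CANONICAL = build_canonical_map(ALIGNMENTS)


def same_concept(a, b):
    """True if the two names are identical or have been aligned, directly or not."""
    return CANONICAL.get(a, a) == CANONICAL.get(b, b)


print(same_concept("x:Room", "z:Zone"))
print(same_concept("x:Room", "x:Door"))
```

Here `x:Room` and `z:Zone` are treated as the same concept even though they were never aligned directly, because both are aligned with `y:Space`.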

It is easy to understand that there will be no unified and comprehensive ontology covering all the elements that describe the macrocosm as a whole. The same is true on a much smaller scale: the building. Several ontologies are needed to describe the building domain as comprehensively as possible, its many aspects, subdomains and related fields.

This is the goal of the “Thing in the future” research platform (Thing in)[6] developed by Orange Research. “Thing in” can be used to model complex systems and subsystems such as buildings, cities or factories as a graph of nodes and relationships. Semantic information based on ontologies can be attached to this graph, and ontologies can be added, combined and related to one another to extend the representation capabilities of “Thing in”.

Unlike specialised BIM platforms, which only manage the basic and standardised BIM information model, “Thing in” is able to manage different information models (ontologies) that can be combined by creating new relationships with BIM entities.

The Industry Foundation Classes (IFC) model [7] is the standardised semantic model used for the BIM. It is one of the models available in “Thing in” in ontology form, and it can be connected with others that cover the field in a different and complementary way. Other domain-specific ontologies will be used for entities in a building that IFC does not cover, such as the intrusion security system. Many trades involved in the building have their own tools and data. These data should be represented in “Thing in” with an appropriate ontology.

By using ontologies, semantics and “Thing in”, it becomes possible to meet the challenge of the Digital Twin of the building in all its dimensions and to extend it via new relationships and new knowledge.

Now that a building has its twin, the next step – the main challenge – is to make this Digital Twin interactive in a way that it can assimilate the Operational BIM concept.

Towards an interactive Digital Twin

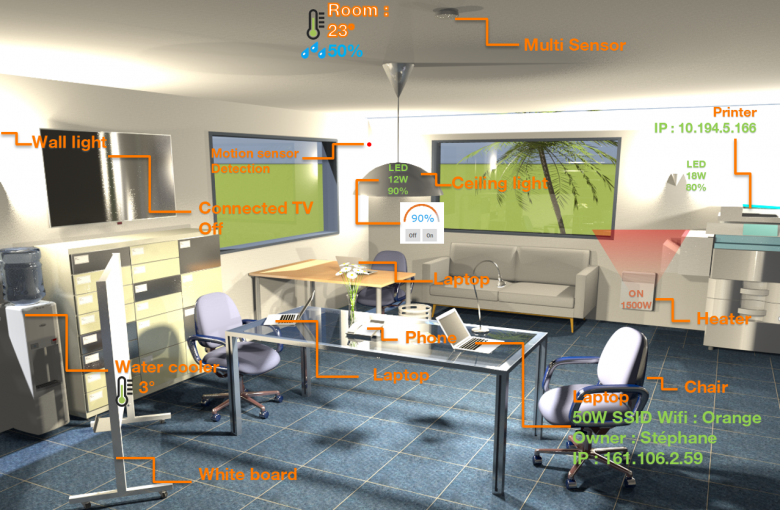

Through a representation of the interactive digital twin, the occupants of the building and its managers can visualise its condition and interact with it through multiple channels, such as screens, virtual reality headsets, glasses etc., as more and more communicating objects and potential new services are introduced.

The first level of interaction is easy to accomplish and simply involves creating or modifying objects, properties or relationships manually.

For objects in the real world, and of course in the Digital Twin, this is not as simple as it seems. While it is easy to imagine this interactive Digital Twin with context menus and nice graphic elements of interactions (widget, gadget etc.), it seems difficult to prevent every Digital Twin in a building from becoming a specific application by itself, implying that any modification/improvement of interaction would require new developments. But this is obviously not desirable.

A second level of interaction could be the addition of contextual menus to objects already represented in the operational BIM, as well as the display of events generated by connected objects and applications that interact with them. The modelling capabilities of “Thing in” described above will associate objects with a particular item of information, called “access modality”,[8] which will describe the technical means of interaction, either directly with the object or through its application or management platform. These modalities could be used to automatically generate this type of interaction.
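
To make the idea of generating interactions from an access modality more concrete, the sketch below derives context-menu entries from a small description of a lamp. The dictionary is only loosely inspired by the W3C Thing Description cited above and is not a valid one. The URLs, the `build_context_menu` function and the choice of GET for reading a property and POST for invoking an action are assumptions made for the example.

```python
# Hypothetical access modality for a lamp (placeholder URLs, not a real service).
LAMP_MODALITY = {
    "title": "Lamp L1",
    "properties": {
        "status": {"forms": [{"href": "https://example.invalid/lamps/l1/status"}]},
    },
    "actions": {
        "toggle": {"forms": [{"href": "https://example.invalid/lamps/l1/toggle"}]},
    },
}


def build_context_menu(modality):
    """Turn each declared property and action into one context-menu entry."""
    entries = []
    for name, spec in sorted(modality.get("properties", {}).items()):
        entries.append(
            {"label": f"Read {name}", "method": "GET", "href": spec["forms"][0]["href"]}
        )
    for name, spec in sorted(modality.get("actions", {}).items()):
        entries.append(
            {"label": f"Invoke {name}", "method": "POST", "href": spec["forms"][0]["href"]}
        )
    return entries


menu = build_context_menu(LAMP_MODALITY)
for entry in menu:
    print(entry["label"], entry["method"], entry["href"])
```

The menu is computed from the description alone, so adding a new action to the description would add a menu entry without any change to the viewer code.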

However, even this is not enough. For example, when you double-click on a lamp in the graphic representation of the Digital Twin, the lamp actually turns on or off, but this change also needs to be reflected graphically in the Digital Twin and propagated to all the objects in the room. To be as true as possible to physical reality, one could take into account the light output of this lamp, expressed in lux, and its colour temperature. The interaction here could alter the brightness and colour of the objects illuminated by the lamp. In this way, even a zoomed-out view shows the lighting status of the room clearly.

Sometimes the interaction can be a reflection of a movement (an opening element that changes from open to closed). Other times, interactions are needed to indicate a reality invisible to the eye in the real world (showing the temperature of a room or of objects through colours). Or, sometimes, one wants to highlight a reality of the physical world in an augmented form. For example, a detection by an infra-red presence sensor will be indicated not only by a red dot on the physical sensor (as often in reality) but also by an animated halo of concentric circles in front of the sensor, corresponding to its angle and range characteristics. Again, the aim in this example is to make the detection more visible on a more or less wide view of the building.

Once again, any specific computer development of interaction should be avoided. What we see is that not only do we need the forms of access to these objects, but we also need an ontology capable of describing a user’s interactions with a graphical user interface (in a broad sense, any human-machine interface) and the services that these interactions render. This involves an ontology that would define the modalities of interactions.

Potentially for the same state change, one could have a choice between several forms of graphical (behaviour) interaction: for example, one reflecting physical reality and the other reflecting an augmented physical reality.
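
One way to picture such a choice is a declarative table that maps a state change to one graphical behaviour per rendering mode, so that the viewer looks up what to do instead of hard-coding it. This is only a sketch: the entity kinds, state names, mode names and behaviour identifiers below are hypothetical and do not come from an existing ontology.

```python
# Hypothetical table: (entity kind, new state) -> behaviour per rendering mode.
INTERACTION_MODALITIES = {
    ("PresenceSensor", "detected"): {
        "realistic": "show_red_dot",
        "augmented": "animate_concentric_halo",
    },
    ("Lamp", "on"): {
        "realistic": "brighten_lit_objects",
        "augmented": "tint_room_by_light_level",
    },
}


def select_behaviour(kind, state, mode, default="no_visual_change"):
    """Look up the graphical behaviour for a state change in a given rendering mode."""
    return INTERACTION_MODALITIES.get((kind, state), {}).get(mode, default)


print(select_behaviour("PresenceSensor", "detected", "realistic"))
print(select_behaviour("PresenceSensor", "detected", "augmented"))
print(select_behaviour("Door", "open", "augmented"))
```

A state change with no declared behaviour falls back to a neutral default, which keeps the viewer generic when new kinds of objects appear.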

Another aspect: Placement…

To keep the Digital Twin of the building up to date in relation to physical reality, the issue of the location and placement of objects becomes crucial. Imagine that the descriptive elements constituting the intrusion security system come from a data source external to the original BIM of the building and are then “injected” into “Thing in” to be associated with, and to complement, the digital twin already present. For example, it may be known that a presence detector is located in each room (detector D1 in room R1 etc.) but its precise position in the Digital Twin may be unknown. Accordingly, it would be useful to describe automatic placement rules (for example, positioning relative to the door and the floor of a given room), which could retroactively fill out the Digital Twin while addressing a use case. Exceptions that are too numerous or too specific to be incorporated into the rules would have to be handled manually. Nevertheless, this example shows that the use of placement rules can make things a lot more efficient.
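
A placement rule of this kind could be sketched as follows: the detector is positioned relative to the door and the floor of its room, and exceptions are taken from a table of manual positions. The classes, the offsets, the coordinates and the identifiers D1, D2, R1 and R2 are illustrative assumptions, not values from a real building or from “Thing in”.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Room:
    uid: str
    door_xy: tuple  # (x, y) position of the door, in metres
    floor_z: float  # elevation of the room's floor, in metres


@dataclass(frozen=True)
class PlacementRule:
    offset_from_door: tuple    # (dx, dy) relative to the door, in metres
    height_above_floor: float  # in metres


# Hypothetical rule: presence detectors sit slightly beside the door, near the ceiling.
RULES = {
    "PresenceDetector": PlacementRule(offset_from_door=(0.5, 0.25), height_above_floor=2.5),
}

# Exceptions that the rules cannot capture are positioned by hand.
MANUAL_POSITIONS = {"D2": (1.0, 1.0, 2.0)}


def place(device_uid, kind, room):
    """Return an (x, y, z) position: manual override first, otherwise the rule for `kind`."""
    if device_uid in MANUAL_POSITIONS:
        return MANUAL_POSITIONS[device_uid]
    rule = RULES[kind]
    x = room.door_xy[0] + rule.offset_from_door[0]
    y = room.door_xy[1] + rule.offset_from_door[1]
    z = room.floor_z + rule.height_above_floor
    return (x, y, z)


r1 = Room("R1", door_xy=(2.0, 0.0), floor_z=3.0)
r2 = Room("R2", door_xy=(6.0, 0.0), floor_z=3.0)
print(place("D1", "PresenceDetector", r1))  # placed by the rule
print(place("D2", "PresenceDetector", r2))  # placed manually
```

With one rule per kind of object, only the exceptions need individual attention, which is where the efficiency gain comes from.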

A word about the technical side…

There are many technical barriers regarding graphical representation. Despite a multitude of high-performance graphics standards for 3D display, BIM formats do not convert to these directly. Sometimes this requires multiple steps and involves a potentially huge loss of information, mainly semantic data.

The established ISO standards for the exchange of BIM are still IFC2x3 [9] and IFC4.[10]

“Fat clients” (programmes rather than a web browser) exist to directly process both formats. However, there is no thin client equivalent for a web browser despite the existence of a wide range of graphic libraries.

The existing ontologies for the different versions of IFC are advantageous: not only do they prevent losses of information, primarily semantic, when BIMs designed with CAD tools are injected into “Thing in”, but they can also be used to submit queries and extend description capabilities.

However, the downside of storing data in this form is a potential increase in storage volume, which may affect some areas of performance. For example, the IFC2x3 and IFC4 ontologies include graphical data because they are the ontological reflection of the IFC2x3 and IFC4 formats. While this may not be the type of data we think of when dealing with semantics, in some cases it would be of interest for determining how to position objects. However, this formalism did not appear to be the most effective for that use case.

To address that requirement and also the possibility of importing graphic avatars from external sources like a 3D model library, a compromise within “Thing in” could be a combination of purely semantic data and a powerful graphical format for each element of the Digital Twin with a graphical representation (e.g. walls). The advantage of that would be to rapidly have available compact graphics data that avoid conversion into a recognised standard format.

Finally, the use of external 3D object libraries to represent avatars would be a possibility, but here too many technical questions arise that threaten the performance of the visualisation and navigation interfaces.

Conclusion

Using the example of the building, this article describes one field of application among others for the “Thing in” research platform, which makes it possible to represent objects in the physical world, and their relationships, through its graph and its semantic descriptions (ontologies), and to reason beyond that representation.

In the field of Smart Building,[11] Orange can deploy “Thing in” to play a pivotal role in delivering these interactions to various target formats (e.g. traditional web applications, virtual reality or augmented reality interfaces etc.): standardising their integration, simplifying their development and reducing its cost, improving interaction with Digital Twins and meeting the expectations of future uses.

To this end, the scientific barriers that Orange’s research is working to remove involve a proposal for a semantic and standardised description of the forms of interaction between a user and a human-machine interface of a Digital Twin, supplemented by a system for describing rules for the automatic placement of objects in the Digital Twin.

The technical barriers lie at the level of tools and libraries: finding a powerful graphical display for thin clients that can be used on any type of device through a web browser, and achieving good performance in extracting and processing data from the Digital Twin.

Digital Twins will become a central element in the management of complex buildings and systems. The results of this research are intended to augment Smart Building solutions offered by Orange Business Services.

Reading to learn more

https://hellofuture.orange.com/fr/le-sens-du-sens-les-ontologies-ce-nest-pas-que-de-la-philosophie/

https://hellofuture.orange.com/fr/thingin-la-plateforme-du-graphe-des-objets/

References

[1] https://www.construction21.org/france/articles/fr/le-bim–pourquoi-comment-et-pour-quand.html

https://www.magazine-decideurs.com/news/les-marches-publics-se-mettent-au-bim

https://plan-bim-2022.fr/

[2] https://fr.wikipedia.org/wiki/Building_information_modeling

[3] https://hellofuture.orange.com/fr/au-dela-de-linternet-des-objets-jumeaux-numeriques-et-systemes-cyber-physiques/

[4] https://fr.wikipedia.org/wiki/Ontologie_(informatique)

[5] https://hal-lirmm.ccsd.cnrs.fr/lirmm-01092173/document

[6] http://www.thinginthefuture.com/

[7] https://fr.wikipedia.org/wiki/Industry_Foundation_Classes

[8] https://www.w3.org/TR/wot-thing-description/

[9] https://standards.buildingsmart.org/IFC/RELEASE/IFC2x3/FINAL/HTML/

[10] https://standards.buildingsmart.org/IFC/RELEASE/IFC4/FINAL/HTML/

[11] https://hellofuture.orange.com/fr/le-futur-des-batiments-intelligents-avec-thingin/