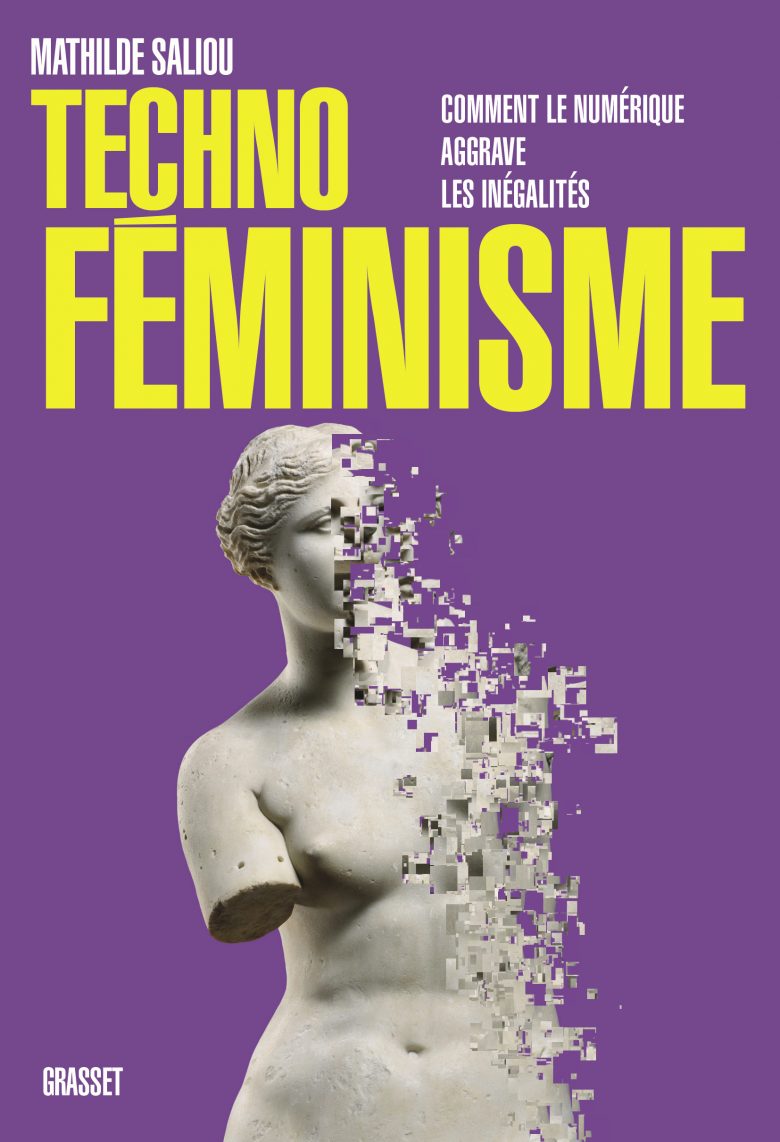

● From making women invisible in the digital world to the ethics of algorithms, this book invites us to look at technology from a new socio-historical angle by going back to the foundations of the mechanisms of discrimination in the digital sector.

● Whether in video games, masculinist forums or amongst business peers, this essay offers a counter-history of the digital world based on the exclusion of women and other minorities.

The potential sexist abuses of artificial intelligence made you want to write this book. Why?

I first became interested in these topics in 2018, when I wondered if artificial intelligence was sexist. In the same year, Joy Buolamwini, a researcher at MIT and founder of the Algorithmic Justice League, published “Gender Shades” with Timnit Gebru. In this research project, they proved that the most commonly used algorithmic models are more effective in recognising the faces of light-skinned men than those of dark-skinned women. We then saw tech giants like Google, Microsoft, and Facebook publish AI ethics charters, undoubtedly as a way to avoid being regulated.

This book is a way for me to provide an angle of analysis and a critique of the digital world from a feminist perspective, regarding both digital practices and the functioning of online services. This viewpoint allows us to discuss issues that go well beyond the place of women in tech, such as disinformation and conspiratorial radicalisation.

You start your essay by discussing online harassment, the masculinist culture in forums and their violence. Why?

There is a discourse about the digital world that suggests that unless users are experts in the subject, they cannot understand the basic functioning of technology. This is not true. So I wanted to make the subject accessible, and I chose the theme of online harassment to show what women feel when they go online, which is comparable to what they experience when harassed on the streets. If harassment mechanisms are allowed to proliferate online, they end up having offline effects, either because victims of sexist or racist harassment experience very real effects (depressive symptoms, suicidal tendencies in the most serious cases) or because the most radicalised people can go as far as murder in the name of racism or sexism. It is also a political issue, since these dynamics are used by movements that aim to challenge democratic institutions, as shown by the attack on the Capitol in the United States and the one at the Three Powers Plaza in Brazil.

Why are video games representative of these systemic problems?

In the 1980s, the video game industry grew by targeting a specific segment: young men in Western countries. It was a way for publishers to secure their income. They developed games for this audience that represent a militarised masculinity, where the player must save his country, a princess, etc.

The good news is that it is possible to break down exclusionary logics. Today, independent publishers are allowing the sector to gain recognition by developing more inclusive scenarios. Video game curator Chloé Desmoineaux has selected some of them.


You believe that we must stop distinguishing between the digital world and the real world…

Let us remember that the digital world does not exist outside the patriarchal logic. It is not a separate, more neutral or more objective world. It was built by people who live in a patriarchal society. By collecting our personal data and wishing to personalise our profiles, technology reduces us to simple boxes, but social life does not work like that, since we are each the fruit of multiple origins and learning, and we are constantly evolving.

You write that “entire segments of digital technology have a problem with diversity. They forget about it, do it a disservice, and even attack it”. Is this also the case for companies that provide technology?

In these companies, diversity is needed to ensure that products are better designed and that they meet the needs of all users, both men and women. There is a business argument that if the technologies produced do not work, or do not work well, for half the population, companies risk losing half their customers.

However, in the digital sector, we find the same logic of domination and the same maintenance and reproduction of inequalities as in other industries. In France, the leaders of technology companies are mainly white men. The same is true of the venture capital that funds all of this. Beyond the issues of gender or skin colour, there is a problem of social class. These leaders come exclusively from a kind of elite, having completed training that requires significant financial resources. They are now realising that this over-representation has an impact on the tools they put on the market.

There is a feeling that dialogue is impossible.

There is a need to open the debate about the politics behind technology and how it is used. IT professionals must stop working in isolation. There is a need to reconnect with real life and social life. Regarding artificial intelligence and algorithm development, there is an urgent need to make dialogue possible with end users who are not necessarily aware of the data submitted to these systems. We need to be able to understand their reaction and anticipate unforeseen impacts of the development of technologies.

What other solutions can be devised to calm all this violence?

In my book, I present three simple routes. These are more or less the same as those already found in feminist demands and environmental movements. The first is education: we need to help people understand the main principles of digital technology by breaking down the feeling that technology is alien, since it gives people a sense of being controlled.

The second is based on the creation of links: the Internet is the technology of the link. Some applications have destroyed calm discourse precisely when we need it to develop conversations that bring together different types of expertise, for example on whether we want certain technologies in our lives. We forget that we have a choice, and it is legitimate to ask whether we want technologies that could be dangerous to human rights.

Finally, we must challenge forms of power and hierarchical schemes often associated with patriarchal logic. Facebook, for example, which is used by more than half of humanity, is controlled by a single person. The law must be capable of changing this, and the Internet has shown that distributed models are possible. After all, the governance of the Internet is itself distributed.